Machine learning of an 18th century hand: transcribing the essays of George III

Chris Olver, Metadata Creator, Georgian Papers Programme, King’s College London

One of the major undertakings of King’s College London, the Omohundro Institute and the College of William & Mary is to transcribe the digital records being created at the Royal Archives. The importance that the Georgian Papers Programme (GPP) partners place on transcription cannot be overstated. Transcribed texts improve the discoverability of digital records, because the transcripts can be searched by keyword. They also provide a corpus of words that can be analysed for index terms, which gives greater granularity than archive catalogue entries. Manual diplomatic transcription is still the gold standard for accuracy, but it is highly skilled and labour-intensive. Given the increasing sophistication of optical character recognition (OCR) software, my brief was to find out whether any available software could serve as an automatic or semi-automatic alternative. As luck would have it, the EU-funded project Transcriptorium (2013-2015) had created the open-source transcription platform Transkribus as one of its outputs. The Transkribus platform is developed and maintained by the University of Innsbruck. It includes a number of tools built by various European partners that let users create a handwritten text recognition (HTR) engine, which can decipher historical handwritten documents and automatically generate transcripts. The platform had already produced good results on English 18th-century handwritten sources in the case of the papers of Jeremy Bentham, so we decided to test the system.

Model-building

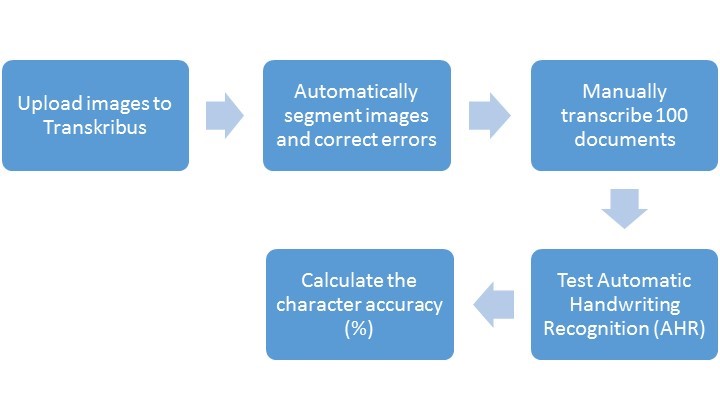

The initial aim was to test how effective the handwritten text recognition (HTR) engine would be on papers from the Royal Archives. We decided to concentrate on the essays of King George III for two reasons. They were the first collection digitised by the Royal Archives and were therefore already available. A HTR model based on George III’s hand would also potentially be the most useful engine to create, as the majority of the papers in the Georgian Papers Programme relate to his reign. The Royal Archives kindly provided us with initial JPEGs of the essays to begin testing. Automatic transcription first requires building the HTR engine, and for this, transcribed copies must be created as the basis of the model. The initial model was therefore based on 100 manually transcribed pages [see diagram for Transkribus workflow]. The initial results showed promise: the best individual result with the first model was a Word Error Rate of 43% and a Character Error Rate of 30.2%. These results were poor compared with the accuracy of manual transcription. However, they showed the potential of the service once the model had been refined and more text added to it.
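Word Error Rate and Character Error Rate are conventionally computed as the edit (Levenshtein) distance between a reference transcription and the HTR output, divided by the length of the reference in words or characters respectively. The Python sketch below assumes those standard definitions. Transkribus’s own evaluation may tokenise or normalise text differently, for example over punctuation or case. The sample strings are invented for illustration.

```python
def levenshtein(ref, hyp):
    """Minimum number of substitutions, insertions and deletions turning ref into hyp.

    Works on strings (character level) or lists of words (word level).
    """
    # Single-row dynamic programming table over the two sequences.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(reference, hypothesis):
    """Character Error Rate, as a percentage of reference characters."""
    if not reference:
        raise ValueError("reference must not be empty")
    return 100.0 * levenshtein(reference, hypothesis) / len(reference)


def wer(reference, hypothesis):
    """Word Error Rate, as a percentage of reference words (whitespace tokens)."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return 100.0 * levenshtein(ref_words, hypothesis.split()) / len(ref_words)


if __name__ == "__main__":
    # Hypothetical ground truth and HTR output, not taken from the essays.
    truth = "the King's speech"
    htr = "the Kings speach"
    print(f"WER: {wer(truth, htr):.2f}%  CER: {cer(truth, htr):.2f}%")
```

For this hypothetical pair, two of the three words differ, which gives a WER of about 66.7%. Only two of the seventeen characters need editing, which gives a CER of about 11.8%. This is why a page can have a high WER but a much lower CER, as in the results reported here: a single wrong character makes the whole word count as an error.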

The refined model for George III’s hand was developed in July 2016. Although it showed clear advances over the first model, its test results were surprisingly worse than the original’s. The reason was that the model had been built mostly on fair, clearly written essays by George III. The testing, by contrast, was carried out on visually more complicated texts with multiple corrections, abbreviations and unclear passages. The experience showed that a robust model needs both more accurate transcription and more examples. In a recent paper, Melinda Jander described using Transkribus to create an HTR engine for the correspondence of the Brothers Grimm. She noted that improving the model requires capturing all interlinear additions and including all text features in tagging, notably expanded abbreviations[1]. The George III model will therefore require further revision of its core transcription (referred to as ‘ground truth’). It will also need more material, to increase the number of recognisable words (or tokens).

These results may seem somewhat disappointing, but one of the major advantages of Transkribus is that it is an ongoing project that is constantly being improved and is well supported by the Transkribus team. In recent months, the team has begun testing HTR models based on recurrent neural networks (RNNs); the earlier models were based on Hidden Markov Models (HMMs). The new models are not only faster but also far more accurate, because the engine can consult a specified language dictionary to aid character recognition [see table below]. These results come from only a small sample of a clearly handwritten essay. Even so, the model at its best achieved roughly 89.5% character accuracy (page 30: CER 10.51%).
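This post does not describe how Transkribus applies its dictionary internally. As a much simpler, hypothetical stand-in for the idea of dictionary-assisted recognition, the Python sketch below post-corrects recognised words. It replaces each word with the nearest entry in a small word list, but only when the edit distance falls within a threshold. The word list, the threshold and the misreadings are all invented for illustration.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def snap_to_dictionary(words, dictionary, max_distance=1):
    """Hypothetical post-correction: replace each word with its nearest dictionary entry.

    Words already in the dictionary, or with no entry within max_distance,
    are left unchanged. Ties are broken alphabetically so results are deterministic.
    """
    if not dictionary:
        return list(words)
    entries = sorted(dictionary)
    corrected = []
    for word in words:
        if word in dictionary:
            corrected.append(word)
            continue
        best = min(entries, key=lambda entry: edit_distance(word, entry))
        corrected.append(best if edit_distance(word, best) <= max_distance else word)
    return corrected


if __name__ == "__main__":
    # Invented word list and HTR misreadings, for illustration only.
    lexicon = {"commerce", "liberty", "of", "parliament", "the"}
    recognised = ["liberfy", "of", "the", "cornmerce"]
    print(snap_to_dictionary(recognised, lexicon, max_distance=1))
    print(snap_to_dictionary(recognised, lexicon, max_distance=2))
```

With a threshold of 1, ‘liberfy’ is corrected to ‘liberty’. ‘Cornmerce’, where ‘m’ has been misread as ‘rn’, needs two edits and is left alone. Raising the threshold to 2 fixes it, at the cost of a greater risk of ‘correcting’ genuine words that are simply missing from the dictionary.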

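| HTR model | File | Page number | Word Error Rate (WER, %) | Character Error Rate (CER, %) |
|---|---|---|---|---|
| GEO_1-3_v2 | RA_GEO_22 | 30 | 34.85 | 10.51 |
| GEO_1-3_v2 | RA_GEO_22 | 31 | 52.52 | 25.44 |
| GEO_1-3_v2 | RA_GEO_22 | 32 | 47.14 | 21.32 |
| GEO_1-3_v2 | RA_GEO_22 | 33 | 42.22 | 16.82 |
| GEO_1-3_v2 | RA_GEO_22 | 34 | 37.21 | 12.96 |
| GEO_1-3_v2 | RA_GEO_22 | 35 | 47.16 | 17.29 |
| GEO_1-3_v2 | RA_GEO_22 | 36 | 39.13 | 20.54 |
| GEO_1-3_v2 | RA_GEO_22 | 37 | 46.18 | 28.41 |
| GEO_1-3_v2 | RA_GEO_22 | 38 | 53.00 | 31.03 |
| GEO_1-3_v2 | RA_GEO_22 | 39 | 37.13 | 15.09 |
| GEO_1-3_v2 | RA_GEO_22 | 40 | 48.78 | 21.62 |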
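The table reports rates page by page. A quick way to summarise it is to average across pages and convert the best CER into a character accuracy (100 minus CER). The Python sketch below does this for the values in the table. Note that it takes an unweighted mean per page, which is an assumption. Pages of different lengths would strictly need pooling by character and word counts, and those counts are not given here.

```python
from statistics import mean

# Page-level results for HTR model GEO_1-3_v2 on file RA_GEO_22, copied from the table.
# Each entry maps page number -> (WER %, CER %).
RESULTS = {
    30: (34.85, 10.51),
    31: (52.52, 25.44),
    32: (47.14, 21.32),
    33: (42.22, 16.82),
    34: (37.21, 12.96),
    35: (47.16, 17.29),
    36: (39.13, 20.54),
    37: (46.18, 28.41),
    38: (53.00, 31.03),
    39: (37.13, 15.09),
    40: (48.78, 21.62),
}


def summarise(results):
    """Unweighted per-page means, plus the page with the lowest CER."""
    wers = [wer for wer, _ in results.values()]
    cers = [cer for _, cer in results.values()]
    best_page = min(results, key=lambda page: results[page][1])
    return {
        "mean_wer": round(mean(wers), 2),
        "mean_cer": round(mean(cers), 2),
        "best_page": best_page,
        # Character accuracy is taken here as 100 minus CER.
        "best_char_accuracy": round(100 - results[best_page][1], 2),
    }


if __name__ == "__main__":
    print(summarise(RESULTS))
```

For these eleven pages, the sketch gives a mean WER of about 44.1% and a mean CER of about 20.1%. Page 30 is the best page, at about 89.5% character accuracy.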

The current work on Transkribus would not be possible were it not for the Royal Archives kindly providing source material for the transcription projects. Transkribus can also serve as a platform for crowd-transcription, which has boosted the work considerably. It has allowed colleagues at the College of William & Mary to greatly accelerate transcription work whilst also testing their own HTR model for Queen Charlotte’s diaries.

Conclusion

Overall, the first six months of using Transkribus have been hugely encouraging in terms of the results now being obtained with the George III model. Preparing the images for transcription can still be somewhat tricky and time-consuming, and results still depend very much on the original quality of the document. Even so, the platform has the capacity to deliver good-quality transcription of handwriting. Even more encouraging are the new tools being developed for the platform, which include:

- the ability to recognise and transcribe tables and export the results to Excel;
- forensic tools that will allow users to identify authors from samples of their handwriting;
- the ability to search entire collections for keywords.

[1] Jander, M. (2016) Handwritten Text Recognition – Transkribus: A User Report, eTRAP Research Group, Institute of Computer Science, University of Göttingen, Germany, 2 November. Available at: http://www.etrap.eu/academic-output/.
