Workshop Reflections: Early Modern Collection Catalogues, British Museum

Samantha Callaghan, Metadata Analyst, King’s Digital Laboratory

Entrance to the British Museum. Flickr user: Tom Flemming, 2018. Reproduced under Creative Commons Licence CC BY-NC

Early Modern Collection Catalogues: Open Questions, Digital Approaches, Future Directions was a workshop held at the British Museum, 15-16 February 2018, and intended to outline and discuss some of the issues that the Enlightenment Architectures: Sir Hans Sloane’s Catalogues of his Collection research team had encountered during their work. To that end, the team invited an international selection of experienced people working with similar types of digital projects or projects in the same time period to respond to the team’s presentations. Many respondees also had the opportunity to present on their own projects/approaches.

It was a congenial two days to meet and discuss, sometimes with much fervour, issues that are common across much of the work undertaken in digital spaces of the Enlightenment period. Below is a mix of reportage on particular sessions, reflections on how the work of the Georgian Papers Programme (GPP) complements or diverges from some standpoints taken by speakers, as well as a broader reflection on the greater digital project landscape in which the GPP sits. Some recurring themes from the workshop included: gaps within the Linked Open Data network for Eighteenth century projects, audiences and models, and workflows.

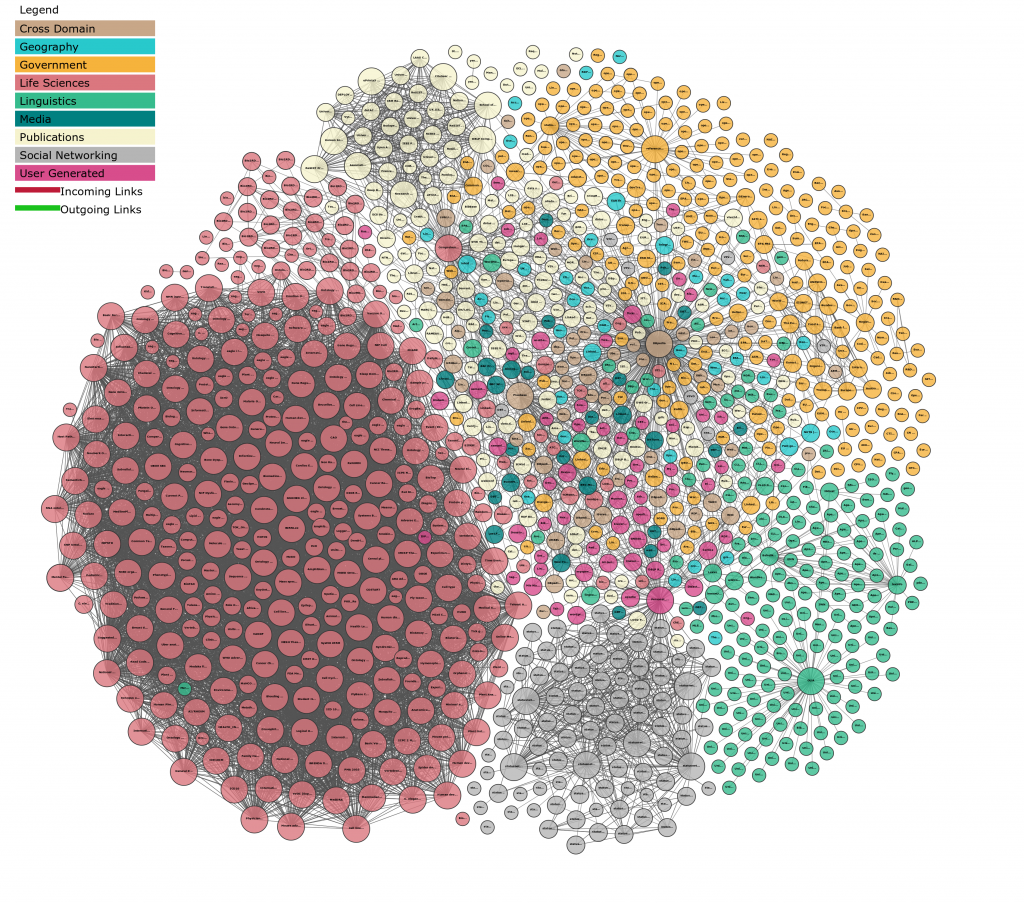

Gaps within the Linked Open Data Network

The British Museum has its own name authority file which contains tens of thousands of entities which could cover the time period in question; the GPP has access to a number of lists (Robert Bucholz’ Office Holders in Modern Britain, and Royal Archive salary lists and other household records digitised through Find My Past) that contain thousands of names that do the same. However the way this data is structured and the formats in which it is kept all affect the accessibility of these entity lists within the context of the projects to which they can be attached let alone beyond the projects and institutions that host them.

Despite the availability of the useful lists of personal names outlined above, they are dispersed across projects and there seems to be a dearth of easily accessible, digitally available name authority files to which our various projects can point with a high level of matching. VIAF is a name authority file and linked data service frequently used by digital projects due to its size and coverage, and was included in the preliminary information architecture for the Georgian Papers Programme (GPP). However, since VIAF is derived from the bibliographic records of predominantly national library catalogues of several countries, there is low coverage for unpublished creators of which a great deal of our material creators are. For instance, for the 66 name authority file records created for a proof of concept platform for the GPP, only 34 of them matched to records in VIAF.

SNAC or the Social Networks and Archival Context project is a relatively new linked data name authority file that might better suit as a source to which archival digital projects might link. It derives from entity records created with a particular metadata standard, EAC-CPF, that is widely used in archives and other heritage institutions. For the GPP entities (referenced above) there were 42 matches. “However, many of the persons, organizations, and families in the Prototype History Research tool [of SNAC] are weakly identified, which is to say, the available evidence does not establish a strong, reliable identity.”¹

Linking Open Data cloud diagram 2017. Andrejs Abele, John P. McCrae, Paul Buitelaar, Anja Jentzsch and Richard Cyganiak. Reproduced under Creative Commons Licence CC BY-SA

Katherine McDonough sparked an engaging discussion about geographic names and gazetteers with her response to a session regarding authority files (see below) . A gazetteer is another type of authority file that is often used in digitisation and digital humanities projects. Incorporating the use of a gazetteer allows for consistent geographical name usage across your data (name authority control) and often allow your users to view a map showing where particular places are situated. One of the most commonly used gazetteer is GeoNames; it’s one of the largest, its coverage is broad (global), it is in wiki form so can be added to or edited easily, and it is a multilingual resource (see Istanbul in the ‘Alternate Names’ tab for names in different languages and historical names also). For projects with a historical focus, however, it has limitations. It does not have the ability to deal with location boundaries changing over time, changing hierarchy (for example places that changed national affiliation as country boundaries moved) or granularity, in terms of houses, properties or streets that no longer exist.

When gathering user feedback for the GPP proof of concept mentioned above, which used GeoNames as its geographical name authority, one respondent asked, “is there not [a] better alternative?” The answer to that is not really. There is no dedicated global gazetteer that covers the time period in question; for the GPP this is problematic since the papers cover the British Empire and its allies (and enemies) as they existed during the Hanoverian period and the Enlightenment Architectures project is likely to suffer the same issue since Sloane’s collections are drawn from across the world. Many of the resources mentioned at the workshop discussions that might cover our time period, such as the Map of Early Modern London or the Historical Gazetteer of England’s Place Names, are generally region specific and so on which echoes the same problem of coverage.

There is a project that may be looking to do something in this area, the World Historical Gazetteer, but it is relatively new and may offer little help at this stage to other projects that have a limited amount of time to run.

Geographic name authority control is a known, and knotty, problem. It was discussed a number of times at the workshop, and despite its limitations, many projects of similar types to the GPP and Enlightenment Architectures make use of GeoNames and/or Wikipedia/Wikidata for what functionality they do offer.

These gaps highlight, perhaps, possible opportunities afforded to those working within this time period. Early Modern/Eighteenth century scholars have the chance to develop or contribute to projects such as the World Historical Gazetteer and/or Pelagios Commons. Another example of an entity authority file, but for ancient people and places, is Pleiades which could provide a model of developing something similar for the time period/s of interest.

Audiences and Models

The Enlightenment Architectures project is, as part of their funding requirements, to publish four scholarly articles drawn from their work with Sloane’s catalogues. However, as they are digital humanities scholars, they know that making the huge amount of markup work and the transcriptions of the catalogues as a dataset available to other digital humanities folk is a very useful and welcome thing to do and thus this is an additional intended output.

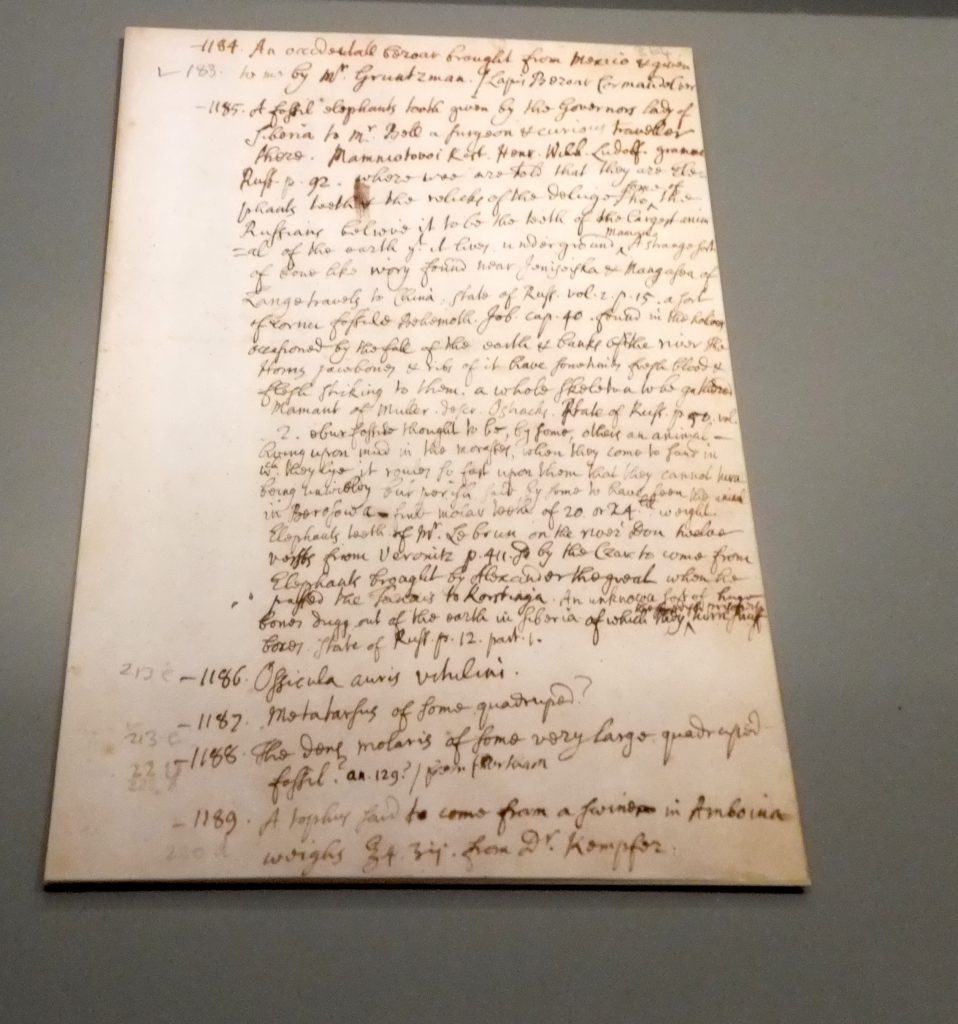

The project highlights questions around audiences and the types of metadata models you use to define your data. Who are your primary users? Who are your secondary (tertiary, quaternary…) users? Are they likely to change over time? Do you know what their information needs are? How are these needs captured? And incorporated into the building of your data models? What types of metadata schema do you use to structure your models to effectively meet user needs? And, in the end, how do you make the models you’ve used and the data you’ve captured available to your audience/s? In the case of the Enlightenment Architectures project they are currently making use of TEI XML, that they have extended through the introduction of their own elements.

A Page from one of Han Sloane’s Catalogues. Flickr user: lebatihem, 2017. Reproduced under Creative Commons Licence CC BY-NC-ND

A session (Approach to objects and catalogue entries and how to encode them) presented by Victoria Pickering and Alexandra Ortolya-Baird, post-doctoral research assistants on the Enlightenment Architectures project, elicited a number of responses from Kalliopi Zervanou, one of the attendees who was invited to directly respond to their presentation. Kalliopi suggested that their approach, which encompasses capturing aspects of item descriptions (such as colour) as well as the layout of text on the page (through the use of line break tags and the ‘rend’ attribute) meant that the encoded text they are creating may not easily lend itself to computation analysis. This may partly be ameliorated through regularisation but highlights that what may be of use to the project team in their work may present barriers to use in the dataset they produce. Especially as they have extended the TEI, an XML schema, that has been used to encode the transcribed catalogues through the creation of their own elements. However, if the intent is also to make this work available through a publicly accessible website then the inclusion of layout tagging could be very useful.

Workflows

Discussion around workflows arose regularly over the course of the workshop, prompted significantly in a session on day one about authority files given by Julianne Nyhan, Deborah Leem and Andreas Vlachidis. Topics that came up included:

- Hand (manual addition of encoding) vs. automation (NER, NLP etc)

- Authority vs. crowdsourcing particularly in relation to sources for context. One attendee commented that the Enlightenment itself constituted a form of crowdsourcing

- The retention of derogatory terms rather than sanitising the material

This last point is particularly appropriate given the time period in which this material was generated. It is important to not only include a readily accessible policy about their retention, but also provide context around their usage, especially if the texts are to be made publicly available.

Window and view, Old Royal Palace, Prague Castle. Sean Pike, 2015. Reproduced under Creative Commons Licence CC BY-NC-SA

Michael Sperberg-McQueen, an authority on TEI and XML in general, gave an informative presentation on day two on the issue of what he called bifocality and approaches to deal with it. He used a window as a metaphor to describe the issue, that is, ‘looking at the window’ and ‘looking through the window.’ In the context of the Enlightenment Architectures project this could relate to two ‘views’; the descriptions of items (the window) and the items they describe (the view through the window). He described how, in other projects, multiple layers of description might be connected and described effectively using a variety markup approaches. Michael also reinforced that standards impact what you can do and how you think about content and that there can be differing levels of description – physical, rhetorical, several layers of linguistic meaning – that can be captured.

This is only a sample of the topics with which we engaged over the full two days. It was extremely worthwhile from my perspective undertaking metadata work for the GPP. The workshop highlighted our shared challenges, provided several avenues of discussion to come to grips with these, but also allowed us to reflect that there are a multiplicity of ways to approach our projects.